The Art of the Algorithm: A Human-Centric, Step-by-Step Guide to AI Image Creation

AI image creation honestly made me a skeptic when I first heard about it. Another tech buzzword, another fleeting trend, I thought, probably just churning out generic, soulless images that lacked any real artistic merit. I’ve spent years working in creative fields, observing the constant flux of new tools and techniques, and my initial reaction to generative AI was one of cautious disbelief. But then I started playing. I dipped my toes into a nascent text-to-image AI platform, inputting a simple, almost whimsical prompt, and what came back wasn’t just a generic image; it was a spark. It wasn’t perfect, far from it, but it was something. And that something quickly became an incredible, mind-bending extension of my own creativity. It’s like having a hyper-talented, impossibly fast digital assistant who can paint, sculpt, photograph, and even animate based on your wildest ideas – if you know how to talk to it.

Over the past couple of years, my journey into AI image creation has involved countless hours navigating its fascinating, sometimes frustrating, but ultimately rewarding world. I’ve witnessed the tools evolve at breakneck speed, from rudimentary outputs characterized by distorted limbs and nonsensical text to jaw-dropping, photorealistic, or deeply artistic pieces that challenge our very definitions of art. And what I’ve learned is this: it’s not about being a coder or even a traditional artist with decades of brush-on-canvas experience; it’s about being a storyteller, a visionary, and a diligent prompt engineer. This guide is for anyone curious about unlocking that potential. We’ll explore the core concepts, AI art generators, and AI image creation techniques that transform simple words into complex visuals.

If you’re curious about transforming your thoughts into stunning visuals – whether for personal projects, professional concept art, marketing, or just for the sheer joy of experimentation – you’re in the right place. This isn’t a technical deep dive into neural networks or complex machine learning algorithms; it’s a practical, step-by-step guide from someone who’s wrestled with prompts, celebrated breathtaking generations, and ultimately fallen in love with this new creative medium. Consider this your roadmap to becoming an adept AI image creator, navigating the exciting frontier of digital art. We’ll cover everything from picking your first tool to understanding the nuances of prompt writing and the critical ethical considerations in this burgeoning field.

Step 1: Choosing Your Canvas – Picking the Right AI Tool

Choosing the right AI tool is your first and arguably most foundational step. Before you can paint, you need your brushes and canvas, and the AI image generation landscape is bustling with new platforms emerging constantly. Each has its own personality, strengths, and quirks. Think of them as different art studios, each with a unique style, a particular set of tools, and a distinct aesthetic philosophy. Understanding these differences is key to matching the right tool to your creative vision.

The Big Players: Your Main Options for AI Image Generation

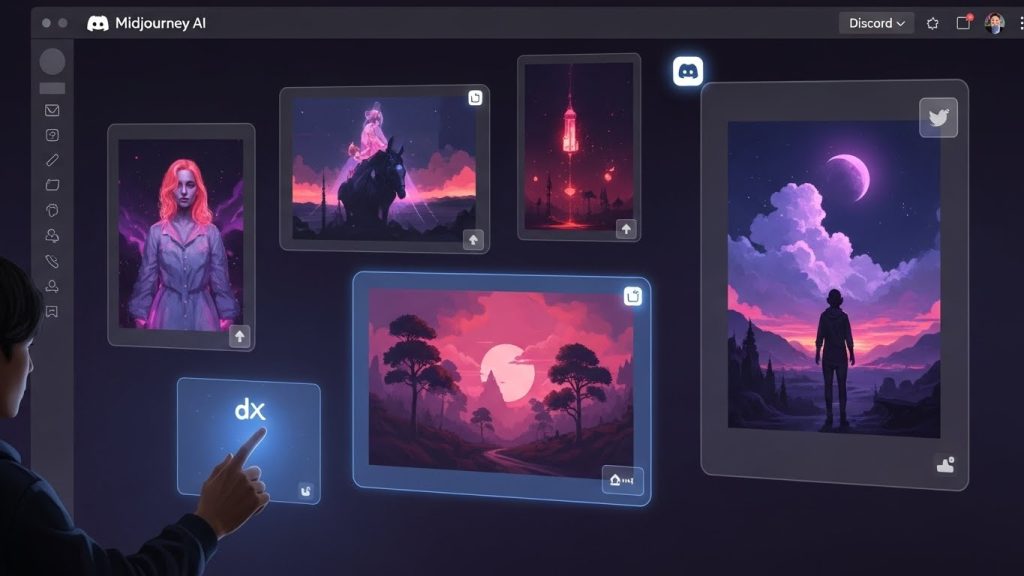

- Midjourney: The Artistic Visionary

If you prioritize aesthetic beauty, evocative moods, and a distinctly artistic flair, Midjourney is often the top recommendation. It excels at creating visually cohesive, often dreamy, painterly, or cinematic images. It’s less about achieving absolute, unblemished photorealism (though it’s getting remarkably good at it) and more about generating high-quality, inspiring generative art. Midjourney primarily operates through a Discord interface, which can initially feel a bit unconventional if you’re not a regular Discord user. However, its command structure (/imagine) and options for refinement are surprisingly intuitive once you get the hang of it.

- DALL-E 3 (via ChatGPT Plus or Microsoft Designer/Copilot): The Language Interpreter

DALL-E, especially its latest iteration, DALL-E 3, stands out as a master of natural language understanding (NLU). If you have a very specific, verbose idea with multiple elements, complex relationships, and nuanced instructions, DALL-E 3 is remarkably good at interpreting and translating it accurately. Its integration with ChatGPT makes the DALL-E 3 prompting process incredibly conversational. You can literally chat with the AI, refine your request naturally, and even ask it to brainstorm ideas for you. It’s also excellent for integrating text into images, a notoriously tricky feat for many AI image generators.

- Strengths: Outstanding prompt adherence, strong contextual understanding, great for complex scenes, integrates text well, ethical training data (reduces copyright concerns for commercial use), seamlessly part of the ChatGPT Plus ecosystem. Also available for free through Microsoft Designer and Copilot.

- Workflow: Typically conversational; you describe your vision in plain English, and ChatGPT translates it into optimized prompts for DALL-E 3.

- Ideal for: Storytellers, content creators, marketers, and anyone needing precise control over specific elements in an image, AI photo generation, and text-to-image AI with complex instructions.

- Stable Diffusion (and its myriad interfaces like Leonardo.ai, NightCafe, Automatic1111): The Open-Source Powerhouse

Stable Diffusion is the open-source champion, offering unparalleled flexibility and control. If you’re someone who loves to tinker, customize, and push the boundaries, this is your playground. While you can run Stable Diffusion locally on your own powerful computer (using interfaces like Automatic1111 or ComfyUI), web-based platforms like Leonardo.ai or NightCafe provide a more user-friendly entry point, offering access to various fine-tuned models (often called “checkpoints” or “LoRAs”) tailored for specific aesthetics – think photorealism, anime, specific illustration styles, and more.

- Strengths: Ultimate customization (custom models, LoRAs), community-driven innovation, deep control over parameters (e.g., ControlNet for pose/composition), often free to use locally (after initial setup), vast ecosystem of plugins and extensions. Excellent for custom AI models and open-source AI art.

- Workflow: Can be complex locally, involving installations and command-line knowledge, but web interfaces simplify it. Prompts often include detailed style and quality descriptors.

- Ideal for: Advanced hobbyists, professional artists seeking granular control, developers, those with specific stylistic needs, and anyone interested in Stable Diffusion guides and pushing technical boundaries.

- Adobe Firefly: The Professional Integrator

Emerging from a company long synonymous with creative software, Adobe Firefly is designed to integrate AI image generation directly into professional workflows. Its primary appeal lies in its commitment to ethical training data (largely Adobe Stock content), making it a safer bet for commercial use in terms of potential copyright claims. It’s often simpler to use than Stable Diffusion, focusing on intuitive features like text-to-image, text effects (applying textures to text), and incredibly powerful generative fill/expand capabilities within applications like Photoshop.

- Strengths: Ethically sourced training data, seamless integration with Adobe Creative Cloud apps, user-friendly interface, strong generative fill and text effects, good for AI for graphic design.

- Workflow: Web-based for standalone use, but truly shines when used within Photoshop or Illustrator for targeted image manipulation.

- Ideal for: Graphic designers, photographers, marketing professionals, and anyone who needs a reliable and ethically sound Adobe Firefly tutorial for creative projects.

Beyond the Big Four: Exploring Other Tools

To truly paint a comprehensive picture of AI art generators, it’s worth briefly mentioning a few other notable platforms:

- Ideogram.ai: Excels at generating accurate text within images, a common weakness for many AI models. Great for logos, posters, or any image where specific words are crucial.

- Playground AI: Offers a generous free tier with multiple models (including Stable Diffusion) and a straightforward interface, making it an excellent starting point for beginners.

- Bing Image Creator (Microsoft Copilot): A free, accessible way to use DALL-E 3, directly integrated into Microsoft’s ecosystem. Great for casual users.

My Personal Take: I use them all, depending on the specific project and the desired outcome. For quick, beautiful concepts or mood boards, I often lean on Midjourney. For intricate, multi-faceted scenes with complex relationships or when I need precise text integration, DALL-E 3 (via ChatGPT) is my go-to. When I need absolute granular control, specific art styles through custom models, or want to explore advanced techniques like ControlNet, I’m deep in Stable Diffusion land, usually through a web-based platform like Leonardo.ai for ease of use, or a local setup for very intensive, private work. Each tool is a different brush in my creative arsenal.

Most platforms offer free trials or tiers, so my best advice is to experiment. Jump in, generate a few images with each, and see which interface, aesthetic, and overall results resonate most with your creative flow. Don’t commit to one until you’ve felt the unique rhythm of a few.

Step 2: The Art of the Prompt – Your Creative Command

The art of the prompt is where the magic truly happens, and frankly, where most people either get frustrated or become utterly addicted. Prompt engineering isn’t just typing words; it’s learning to communicate with an alien intelligence, a bit like teaching a child a new, complex concept by breaking it down into understandable pieces. It’s a new form of linguistic artistry, where precision, evocative language, and an understanding of how the AI “thinks” are paramount. This is the heart of text-to-image prompting.

Think of your prompt as a director’s brief to a film crew, a detailed specification to an architect, or a recipe for a master chef. The more clearly, specifically, and evocatively you describe your vision, the better the AI can execute it. A vague prompt will yield vague, often unsatisfying results. A well-crafted prompt, however, can unleash astonishing creations.

The Anatomy of a Powerful AI Art Prompt

A truly effective AI art prompt is often a carefully constructed sentence (or series of phrases) that guides the AI toward your desired output. Here’s a breakdown of the key elements, often used in conjunction:

- 1. The Subject: This is the core of your image. Be incredibly specific.

- Weak: “A dog.” (You’ll get a generic dog)

- Better: “A golden retriever.” (More specific)

- Even Better: “A fluffy golden retriever puppy, with bright, curious eyes, looking playful.” (Adds key characteristics and emotion)

- Consider: age, gender, species, color, material, specific clothing, distinguishing features.

- 2. The Environment/Setting: Where is the subject? What surrounds it?

- Adding to the above: “A fluffy golden retriever puppy, with bright, curious eyes, looking playful, chasing butterflies in a sun-dappled meadow filled with wildflowers.” (Adds location, specific elements, and lighting implication)

- Consider: time of day, weather, geographical features (mountains, ocean), architectural style (Victorian street, futuristic cityscape), interior details (cozy library, bustling market).

- 3. The Action/Interaction: What is happening? How does the subject interact with its environment or other elements?

- Adding to the above: “A fluffy golden retriever puppy, with bright, curious eyes, playfully leaping through a sun-dappled meadow filled with wildflowers, trying to catch a shimmering blue butterfly.” (Adds dynamic action and a specific interaction)

- Consider: specific verbs, relationships between multiple subjects, and implied movement.

- 4. The Artistic Style/Medium: This is crucial for guiding the aesthetic. It can transform the exact same subject into vastly different images.

- Common examples: “oil painting,” “watercolor,” “charcoal sketch,” “digital art,” “vector illustration,” “pixel art,” “photography,” “3D render.”

- Art Movements/Genres: “in the style of Van Gogh,” “impressionistic,” “surrealist,” “cyberpunk art,” “steampunk,” “gothic,” “art nouveau,” “anime style,” “sci-fi.”

- Rendering Engines: “Unreal Engine 5,” “Octane Render,” “V-Ray.” These imply a certain level of photorealism and detail.

- Adding to the above: “A fluffy golden retriever puppy, with bright, curious eyes, playfully leaping through a sun-dappled meadow filled with wildflowers, trying to catch a shimmering blue butterfly, painted in the vibrant, expressive style of an Impressionist landscape, with thick impasto brushstrokes.”

- 5. The Lighting and Atmosphere/Mood: How is the scene lit? What feeling do you want to evoke?

- Lighting: “cinematic lighting,” “dramatic volumetric light,” “soft diffused light,” “neon glow,” “golden hour,” “blue hour,” “chiaroscuro,” “rim light.”

- Atmosphere/Mood: “ethereal,” “gritty,” “serene,” “chaotic,” “mysterious,” “joyful,” “dystopian,” “whimsical.”

- Adding to the above: “…painted in the vibrant, expressive style of an Impressionist landscape, with thick impasto brushstrokes, bathed in warm, golden hour sunlight, creating long shadows and a joyful, serene atmosphere.”

- 6. Camera/Composition Details (for photographic styles): If you’re aiming for realism, these terms are invaluable.

- Examples: “close-up,” “wide shot,” “full body shot,” “portrait,” “dutch angle,” “rule of thirds,” “depth of field,” “bokeh,” “85mm lens,” “macro shot,” “anamorphic lens,” “HDR.”

- Adding to the above (if aiming for photo-realism): “…playfully leaping through a sun-dappled meadow filled with wildflowers, trying to catch a shimmering blue butterfly, photorealistic, captured with an 85mm prime lens, shallow depth of field, natural light, eye-level perspective.”

- 7. Quality Boosters & Specific Keywords: Phrases that nudge the AI towards higher quality.

- Examples: “ultra detailed,” “masterpiece,” “award-winning photograph,” “highly intricate,” “8k,” “4k,” “cinematic,” “vivid colors,” “sharp focus.”
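If it helps to see the seven elements as separate slots, here is a minimal Python sketch that assembles them into a single prompt string. The `PromptParts` class and `build_prompt` function are hypothetical names I made up for illustration; no platform requires you to structure prompts this way, and the order of elements is simply one reasonable choice.

```python
from dataclasses import dataclass, field


@dataclass
class PromptParts:
    """Hypothetical container for the seven prompt elements described above."""
    subject: str
    environment: str = ""
    action: str = ""
    style: str = ""
    lighting_mood: str = ""
    camera: str = ""
    quality: list[str] = field(default_factory=list)


def build_prompt(parts: PromptParts) -> str:
    """Join the non-empty elements into one comma-separated prompt string."""
    pieces = [
        parts.subject,
        parts.action,
        parts.environment,
        parts.style,
        parts.lighting_mood,
        parts.camera,
        *parts.quality,
    ]
    # Skip anything left blank so the prompt has no stray commas.
    return ", ".join(p.strip() for p in pieces if p and p.strip())


puppy = PromptParts(
    subject="a fluffy golden retriever puppy with bright, curious eyes",
    action="playfully leaping to catch a shimmering blue butterfly",
    environment="in a sun-dappled meadow filled with wildflowers",
    style="Impressionist landscape painting, thick impasto brushstrokes",
    lighting_mood="warm golden hour sunlight, joyful, serene atmosphere",
    quality=["highly intricate", "vivid colors"],
)
print(build_prompt(puppy))
```

Keeping each element in its own field makes it easy to swap just the style or just the lighting between generations and compare what changes.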

Platform-Specific Parameters: The Fine-Tuning Dials

Many platforms allow additional parameters, often appended to the end of the prompt or entered into separate fields:

- Aspect Ratio (--ar in Midjourney, or separate settings elsewhere): Controls the image dimensions. E.g., --ar 16:9 (widescreen), --ar 9:16 (portrait), --ar 3:2.

- Negative Prompts (--no in Midjourney, or separate negative prompt box): These are crucial for telling the AI what not to include. Common examples: --no blurry, deformed, text, watermark, extra limbs, bad anatomy, ugly.

- Stylize (--s in Midjourney): Controls the artistic stylization applied by Midjourney. Higher values make it more artistic, lower values adhere more strictly to the prompt.

- Chaos (--c in Midjourney): Introduces more variation in the initial grid of images.

- Seed Value: An important numerical value that determines the initial noise pattern. Knowing a seed allows you to regenerate very similar images or create controlled variations.
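To make the parameter list above concrete, here is a small Python sketch that builds a Midjourney-style suffix to paste after your prompt text. Midjourney parameters are typed with two hyphens; the `midjourney_suffix` helper itself is my own hypothetical illustration, and it does not check values against whatever ranges Midjourney currently allows.

```python
def midjourney_suffix(aspect_ratio=None, exclude=None, stylize=None,
                      chaos=None, seed=None):
    """Build a parameter string to append after the prompt text.

    Flag names follow Midjourney's double-hyphen convention; this helper
    is hypothetical and performs no validation of the values.
    """
    flags = []
    if aspect_ratio:
        flags.append(f"--ar {aspect_ratio}")
    if exclude:
        # Items to keep out of the image, comma-separated after --no.
        flags.append("--no " + ", ".join(exclude))
    if stylize is not None:
        flags.append(f"--s {stylize}")
    if chaos is not None:
        flags.append(f"--c {chaos}")
    if seed is not None:
        flags.append(f"--seed {seed}")
    return " ".join(flags)


prompt = "a medieval castle on a hill at dawn"
suffix = midjourney_suffix(aspect_ratio="16:9",
                           exclude=["text", "watermark"],
                           seed=1234)
print(f"{prompt} {suffix}")
# prints: a medieval castle on a hill at dawn --ar 16:9 --no text, watermark --seed 1234
```

On platforms with separate settings fields, the same values go into the aspect ratio, negative prompt, and seed boxes rather than into the prompt itself.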

Let’s try a simple idea and evolve it through “how to write prompts”:

- Initial thought: “A castle.”

- First prompt attempt: A castle. (You’ll get a castle, but it’ll be generic, probably not what you envisioned).

- Adding detail: A medieval castle on a hill. (Better, more context, but still quite broad).

- Adding style & mood: A majestic medieval castle perched atop a craggy hill, surrounded by a swirling mist, gothic architecture, dramatic lighting, fantasy art, volumetric light. (Now we’re talking! This defines a specific genre and atmosphere.)

- Refining and adding specifics (a strong example of prompt engineering): A majestic medieval castle, inspired by Neuschwanstein, perched atop a craggy hill at dawn, surrounded by a swirling, ethereal mist, gothic architecture, intricate stone carvings, dramatic cinematic lighting, deep shadows, fantasy art, volumetric light, highly detailed, octane render, 8k --ar 16:9 (This is getting quite specific, drawing on real-world inspiration, technical rendering terms, and explicit quality boosters. It tells the AI exactly what you want.)
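The 16:9 aspect ratio on that final castle prompt is only a width-to-height ratio. In tools where you type pixel dimensions yourself, you have to turn the ratio into numbers. Here is a rough Python sketch of that arithmetic. The function name, the one-megapixel default, and the rounding to multiples of 8 are all my assumptions (Stable Diffusion front ends commonly expect sizes divisible by 8), so check what your tool actually accepts.

```python
import math


def dimensions_for_ratio(ratio: str, target_pixels: int = 1024 * 1024,
                         multiple: int = 8) -> tuple[int, int]:
    """Return (width, height) near target_pixels for a ratio like '16:9'.

    Both sides are rounded to the nearest `multiple`, an assumption that
    matches the divisible-by-8 sizes many Stable Diffusion tools expect.
    """
    w_part, h_part = (int(x) for x in ratio.split(":"))
    # Solve width * height = target_pixels with width / height = w_part / h_part.
    height = math.sqrt(target_pixels * h_part / w_part)
    width = height * w_part / h_part

    def snap(value: float) -> int:
        return max(multiple, round(value / multiple) * multiple)

    return snap(width), snap(height)


print(dimensions_for_ratio("16:9"))  # prints: (1368, 768)
print(dimensions_for_ratio("1:1"))   # prints: (1024, 1024)
```

Because of the rounding, the result is only approximately 16:9, which is usually close enough for generation.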

My Expert Tip on Prompting: It’s an iterative dance, a conversation. Start relatively simple, see what the AI gives you, then add, subtract, or rephrase elements. I’ve personally spent hours tweaking a single word or phrase, experimenting with synonyms, or adjusting the order of descriptors to get just the right nuance. If you’re not getting what you want, break your vision into its constituent parts and address each one in your prompt. Don’t be afraid to be verbose, but also learn to be precise. Think of your prompt as your magic spell – the right incantation yields astounding results. The key to the best AI prompts is relentless experimentation and a keen eye for what the AI interprets.

Step 3: Generating and Iterating – The Feedback Loop

Generating and iterating on your AI images is where the initial spark turns into a polished gem. You’ve meticulously crafted your prompt, chosen your tool, and now it’s time to hit that generate button. But the journey doesn’t end with the first output; it’s just beginning. This phase is all about refining, correcting, and pushing the boundaries of what you thought possible. This is where you master AI image creation techniques.

From First Draft to Final Piece: The Iterative Process

- Analyze the Results: The AI will usually give you a few variations (typically 4 in Midjourney, 1-4 in DALL-E, adjustable in Stable Diffusion). Look at them critically.

- What worked well? Did the AI capture the mood, the subject, the style?

- What’s close to your vision but needs a tweak?

- What’s completely off? Why do you think it went wrong? (Often, it’s a prompt ambiguity.)

- Pay attention to common AI artifacts, such as strange hands, distorted faces, or nonsensical elements, especially with older models or less refined prompts.

- Variations & Upscaling: Most platforms offer crucial follow-up actions:

- Creating Variations: If you like one of the generated images but want slightly different interpretations or minor adjustments, select the “V” (variation) button. This tells the AI to use that image as a starting point and generate new, similar options. This is essential for fine-tuning.

- Upscaling: Once you have an image you’re happy with, upscale it. The initial generations are often lower resolution. Upscaling enhances the detail, sharpens the edges, and prepares it for practical use. Many tools have built-in upscalers, but dedicated external upscale AI image tools (like Topaz Gigapixel AI or free online alternatives) can provide even better results.

- Refinement: Going Back to the Prompt: This is arguably the most crucial step in achieving high-quality AI image generation. If the images aren’t quite right, go back to your prompt and apply your learnings from the analysis.

- Add Specificity: Did the AI miss a key detail? Add it more explicitly. “A red car” might become “a gleaming cherry-red 1967 Ford Mustang Fastback.”

- Use Negative Prompts: Did it add something you didn’t want (e.g., text, extra limbs, blur)? Explicitly tell the AI to leave it out, for example --no text, blurry, distorted in Midjourney, or the same terms in a negative prompt box elsewhere.

- Adjust Style Keywords: Is the style not quite there? Try different style keywords. If “digital art” isn’t sharp enough, try “vector illustration” or “cel-shaded comic.”

- Tweak Mood/Atmosphere: If the mood is off, adjust your atmospheric descriptors. “Gloomy forest” might become “dark, foreboding forest under a heavy, overcast sky, volumetric fog.”

- Weighting (Platform Dependent): In some tools (like Stable Diffusion), you can assign weights to parts of your prompt, making certain elements more dominant. E.g., (cat:1.2) sits on (dog:0.8).
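To show what the weighting syntax in the last item actually encodes, here is a small Python sketch that splits a prompt into (text, weight) pairs. It only understands the simple "(text:number)" form from the example above; the `parse_weights` function is a hypothetical illustration of the idea, not how any Stable Diffusion interface parses prompts internally.

```python
import re

# Matches "(text:1.2)" style emphasis; text outside a match gets weight 1.0.
WEIGHTED = re.compile(r"\(([^():]+):([0-9]*\.?[0-9]+)\)")


def parse_weights(prompt: str) -> list[tuple[str, float]]:
    """Split a prompt into (text, weight) pairs. Illustrative only."""
    pairs = []
    pos = 0
    for match in WEIGHTED.finditer(prompt):
        # Unweighted text that sits before this weighted chunk.
        plain = prompt[pos:match.start()].strip(" ,")
        if plain:
            pairs.append((plain, 1.0))
        pairs.append((match.group(1).strip(), float(match.group(2))))
        pos = match.end()
    tail = prompt[pos:].strip(" ,")
    if tail:
        pairs.append((tail, 1.0))
    return pairs


print(parse_weights("(cat:1.2) sits on (dog:0.8)"))
# prints: [('cat', 1.2), ('sits on', 1.0), ('dog', 0.8)]
```

A weight above 1.0 asks the model to pay more attention to that phrase and a weight below 1.0 asks for less, which is why small steps such as 1.1 or 1.2 are a sensible place to start.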

For instance, if my “majestic castle” prompt yielded something too dark and ominous when I wanted ethereal, I might add “bright morning light, sun-kissed turrets, fairytale atmosphere” to the prompt. If the architecture wasn’t Gothic enough, I’d emphasize “intricate Gothic spires, flying buttresses, stained glass windows.” It’s all about continuous feedback – the AI generates, you evaluate, you refine the input. This iterative process is the secret sauce to becoming a proficient AI image creator.

Beyond Basic Generation: Advanced Iteration Strategies

- Seed Values for Consistency: As mentioned, a “seed” value is a crucial number that dictates the initial random noise from which an image is generated. If you find an image you love, saving its seed value (if the platform allows) enables you to reproduce very similar results. This is invaluable for generating a consistent series of images or for making precise, controlled variations. Want to change just the color of a character’s shirt without altering their pose or the background? Use the same seed and modify only that element in the prompt.

- Image-to-Image (Img2Img): This powerful feature, available in many AI image generators, allows you to use an existing image (either one you generated or one you uploaded) as part of your prompt. The AI then uses the image’s composition, colors, or content as a starting point, transforming it in response to your text prompt. This is fantastic for:

- Style Transfer: Take a photo and turn it into a Van Gogh painting.

- Content Transformation: Change a cat into a lion in the same pose.

- Maintaining Consistency: Use a base image to guide the composition of a new generation.

- Inpainting and Outpainting (Generative Fill): These are revolutionary AI image editing techniques.

- Inpainting: You select a specific area of an image and prompt the AI to fill or modify just that selection. Want to change a person’s shirt color? Inpaint it. Want to add a cup to a table? Inpaint it.

- Outpainting (Generative Expand): This allows you to expand the canvas beyond its original borders, and the AI intelligently generates new content that seamlessly blends with the existing image. Need a wider landscape shot? Outpaint it. This is incredibly powerful for extending backgrounds or changing aspect ratios while preserving content. Adobe Firefly, which powers Photoshop’s Generative Fill and Generative Expand, is particularly strong here.

- Mixing Models/LoRAs (Stable Diffusion Specific): For Stable Diffusion users, the ability to load and blend different fine-tuned models (checkpoints) or LoRAs (Low-Rank Adaptation models) is an advanced feature. You can combine a photorealistic model with a LoRA trained on a specific character or art style to achieve highly unique results.
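The seed idea from the first item in this list is easier to trust once you see it in miniature. The Python sketch below is a toy stand-in, not a real diffusion pipeline: it uses the standard library's random generator to produce a few numbers of "starting noise" and shows that the same seed gives the same noise. The `initial_noise` function is a hypothetical name of mine.

```python
import random


def initial_noise(seed: int, size: int = 4) -> list[float]:
    """Toy stand-in for the starting noise of a diffusion run."""
    rng = random.Random(seed)  # private generator, so global state is untouched
    return [round(rng.gauss(0.0, 1.0), 3) for _ in range(size)]


same_a = initial_noise(seed=1234)
same_b = initial_noise(seed=1234)
different = initial_noise(seed=1235)

print(same_a == same_b)     # True: same seed, same starting noise
print(same_a == different)  # a different seed is expected to give different noise
```

In a real generator the starting noise is a much larger array, but the principle is the same: fix the seed, change one thing in the prompt, and most of the difference you see comes from that change.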

This iterative feedback loop, constantly generating, analyzing, and refining, is how you truly learn the language of AI art. It’s a dialogue between your vision and the algorithm’s capabilities, pushing both to create something extraordinary.

Step 4: Advanced Techniques and Workflow Integration

Once you’re comfortable with basic prompting and iterative refinement, a whole new dimension of AI art workflow and advanced control opens up. This is where AI image creation moves from casual experimentation to becoming a serious tool for artists, designers, and innovators.

Mastering Control: ControlNet

For users of Stable Diffusion (and some other platforms adopting similar features), ControlNet is a game-changer. It allows you to feed the AI an auxiliary image that acts as a structural guide, giving you unprecedented control over the generated output. Instead of simply describing a pose, you can show the AI the exact pose you want.

- Pose Control: Upload a stick figure, a line drawing, or a photograph of a person in a specific pose. ControlNet will generate an image that closely follows that pose, while the text prompt still decides the subject, style, and setting. This is invaluable for character design, comic art, and ensuring consistent actions across multiple images.

- Canny Edge Detection: Provide a simple line drawing, and ControlNet will use those edges as the backbone for the generated image, effectively allowing you to “color in” a sketch with AI.

- Depth Maps: Supply an image with depth information, and the AI will generate a new image respecting the original’s 3D spatial arrangement. This is fantastic for architectural visualization or for scenes that require precise perspective.

- Normal Maps, OpenPose, Segmentation: There are many other ControlNet models, each providing a different vector of control (e.g., lighting direction, object masks).
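To give a feel for the kind of structural guide ControlNet works from, here is a tiny Python sketch that turns a grayscale grid into an edge map. I want to be clear that this is a simplified gradient threshold, not the full Canny algorithm (it has no smoothing, non-maximum suppression, or hysteresis), and the `edge_map` function and its threshold of 50 are assumptions of mine for illustration.

```python
def edge_map(image: list[list[int]], threshold: int = 50) -> list[list[int]]:
    """Mark pixels whose brightness differs sharply from the right or lower neighbor.

    A simplified gradient threshold, not the full Canny algorithm.
    """
    rows, cols = len(image), len(image[0])
    edges = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Brightness jump to the neighbor on the right and the one below.
            right = abs(image[r][c] - image[r][c + 1]) if c + 1 < cols else 0
            down = abs(image[r][c] - image[r + 1][c]) if r + 1 < rows else 0
            if max(right, down) > threshold:
                edges[r][c] = 1
    return edges


# A dark square (0) on a bright background (255), as grayscale values.
sketch = [
    [255, 255, 255, 255],
    [255,   0,   0, 255],
    [255,   0,   0, 255],
    [255, 255, 255, 255],
]
for row in edge_map(sketch):
    print(row)
```

The output marks where the dark square meets the background and leaves flat areas empty. That outline is the sort of information an edge-based ControlNet model uses to keep the composition fixed while the prompt fills in everything else.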

ControlNet transforms Stable Diffusion from a “prompt-based guesser” to a “guided creator,” making it a powerhouse for advanced AI generation where precise composition is key.

Beyond Static Images: Animation and 3D

While this guide focuses on still images, it’s worth noting that the field is rapidly expanding into motion. Tools are emerging that allow you to:

- Animate Generated Images: Create short video clips or GIF animations from a single AI-generated image.

- Generate Video from Text: Similar to text-to-image, but outputs video directly (e.g., OpenAI’s Sora, RunwayML).

- 3D Assets from Text/Images: Generate 3D models or textures from prompts, integrating AI into concept art for game design and virtual environments.

These capabilities are still relatively nascent for the average user compared to image generation, but they represent the cutting edge of AI art workflow.

Integrating AI into Professional Workflows

AI image creation isn’t just about standalone art pieces; it’s increasingly integrated into professional creative pipelines.

- Concept Art & Brainstorming: Quickly generate hundreds of variations for characters, environments, or objects, drastically speeding up the ideation phase for game developers, filmmakers, and illustrators.

- Marketing & Advertising: Create unique social media graphics, ad campaign visuals, or product mockups with unprecedented speed and customization.

- Graphic Design: Use generative fill for quick mockups, background removal, or adding elements to designs without needing extensive manual work.

- Storytelling & Publishing: Illustrate books, create visual narratives, or develop unique comic book panels.

- Personalization: Generate personalized avatars, custom emojis, or unique digital gifts.

The ability to generate a visual concept in minutes rather than hours or days is transformative. It allows creatives to focus on the higher-level conceptualization and refinement, offloading the labor-intensive initial execution to the AI.

Ethical and Practical Considerations (Because it’s Not Just About Pretty Pictures)

As someone deeply involved in this space, I feel a profound responsibility to address the broader implications of AI image creation. This technology is powerful, and like any powerful tool, its use comes with significant ethical and practical considerations that every AI artist and user must understand.

Copyright and Ownership: The Murky Waters

This is perhaps the most debated and rapidly evolving legal landscape surrounding generative AI.

- The Problem: Most powerful AI models are trained on vast datasets of existing images from the internet, many of which are copyrighted. The question then becomes: If an AI generates an image influenced by millions of copyrighted works, who owns the new image? Is it “derivative” or “transformative”?

- Platform Policies: Terms of service vary by platform. Many grant you broad rights to use the images you generate, but a platform’s terms cannot guarantee that an image is protected by copyright or free of third-party claims, so commercial use can be trickier. Some platforms (like Adobe Firefly) explicitly train on ethically sourced data (e.g., Adobe Stock and public domain images) to mitigate these risks, offering greater assurance for commercial applications.

- The “Human Authorship” Debate: Legal systems often require “human authorship” for copyright protection. The U.S. Copyright Office, for example, has indicated that AI-generated works without significant human creative input may not be copyrightable. This means the skill of prompt engineering and subsequent human editing/refinement is becoming increasingly important for asserting ownership.

- My Advice: For personal projects, enjoy. For commercial projects, err on the side of caution. Always disclose AI assistance if asked, and prioritize platforms that use ethically sourced training data. The legal precedents are still being set, so staying informed is crucial for navigating AI copyright law.

Bias in AI: Mirroring Society’s Flaws

AI models learn from the vast datasets they’re trained on – and these datasets inevitably reflect existing societal biases, inequalities, and stereotypes present in the real world (and on the internet).

- The Manifestation: This can lead to AI generating images that perpetuate stereotypes (e.g., doctors are male, nurses are female; certain professions are associated with specific ethnicities), lack diversity, or even generate harmful content if not properly filtered. For example, a prompt for “a CEO” might disproportionately generate images of white men, simply because that’s what the training data reflected.

- Mitigation: As AI image creators, we have a responsibility to be aware of this inherent bias. Actively prompt for diversity, specify gender, ethnicity, and other characteristics to broaden the AI’s output. Critically examine the results and challenge the biases you observe. This ongoing vigilance is part of being an ethical user of generative AI.

The “Artist” Debate: A New Form of Creativity

Is someone who uses AI an artist? My unequivocal answer, after years of engaging with this medium, is yes.

- A New Medium: The tool may be new, but the creative intent, the vision, the iterative process of refinement, the discerning eye to curate and perfect the output, and the intellectual effort behind the prompt writing are entirely human. It’s akin to the emergence of photography in the 19th century – initially dismissed by traditional painters as a mechanical process, it quickly became recognized as a powerful art form in its own right. Similarly, digital painting, photomanipulation, and music production all faced skepticism before becoming accepted creative practices.

- The Role of the Human: AI art doesn’t replace human artists; it offers them a powerful new medium. The artist’s skill now lies in conceiving, directing, guiding, and interpreting the AI’s output, much as a film director doesn’t physically build every set or perform every role but rather orchestrates the entire creative process. The true skill lies in the dialogue with the machine. This is the future of AI art and creativity.

Misinformation and Deepfakes: The Double-Edged Sword

The power to create highly realistic images (or even video) also carries the responsibility of ethical use and awareness of potential misuse.

- The Danger: Generating convincing fake images, propagating misinformation, or creating “deepfakes” for malicious purposes is a serious concern. This technology can be used to erode trust, spread propaganda, or harass individuals.

- Our Responsibility: As creators and consumers, we have a role in understanding and discussing these dangers. Promoting media literacy, advocating transparent labeling of AI-generated content, and developing tools to detect AI-generated content are crucial steps. As creators, we must ensure our use of AI image generation is always ethical and transparent.

Environmental Impact: The Hidden Cost

While often overlooked in enthusiastic discussions, the training and ongoing operation of large generative AI models consume significant computational resources and, by extension, energy.

- The Scale: Training a state-of-the-art model can consume as much energy as several homes for a year, contributing to carbon emissions.

- Consideration: As the technology becomes more widespread, the cumulative environmental impact is a growing concern. While individual users have limited direct control, awareness can drive demand for more energy-efficient models and data centers, contributing to a more sustainable AI art workflow.

Conclusion: Embrace the Imagination

AI image creation is more than just a novelty or a passing technological fad; it’s a profound shift in how we can visualize, communicate, and even conceptualize ideas. It has democratized art creation, putting powerful visual tools into the hands of anyone with an imagination and a willingness to learn. It’s an ongoing experiment, a partnership between human creativity and algorithmic capability.

The journey won’t always be smooth, believe me. You’ll generate some bizarre, four-fingered monstrosities, some truly ugly images, and some frustratingly close-but-not-quite outputs. You’ll wrestle with the nuances of prompt writing and the seemingly arbitrary whims of the algorithm. But amidst those, you’ll stumble upon moments of pure magic – an image that perfectly captures the scene in your mind, a visual that inspires an entirely new story, or a piece of art that simply takes your breath away.

My own experience has shown me that the most successful AI artists aren’t necessarily the ones with the most technical know-how or the most expensive hardware, but those with the deepest well of imagination, the keenest eye for detail, the patience to experiment, and the critical thinking to iterate effectively. It’s about being a director for an infinitely capable, yet literal-minded, digital artist.

So, jump in, play around, and don’t be afraid to dream big. Engage with the community, learn from others, and share your creations. The future of creativity is here, and your visual imagination is about to get a whole new superpower. The canvas is limitless, and the tools are at your fingertips. What will you create next?